Image uploaded

Smart Match detects all products and accessories in the frame of any image uploaded.

Making novel fashion images searchable, matchable, and trustworthy inside Phia Lens.

"People use Google Lens for nearly 20 billion visual searches every month." — Google, 2024

Maya, 26 — urban, fashion-conscious, price-sensitive. Screenshots celebrity fits on Instagram.

"When I see something on social, find it — or close — in my budget, and show me why it matched."

Daily active users completing a tap-through to a retailer product page.

Why this metric: it's the single signal that captures the full value loop — user intent, AI trust, and retailer commercial outcome. One click proves all three at once.

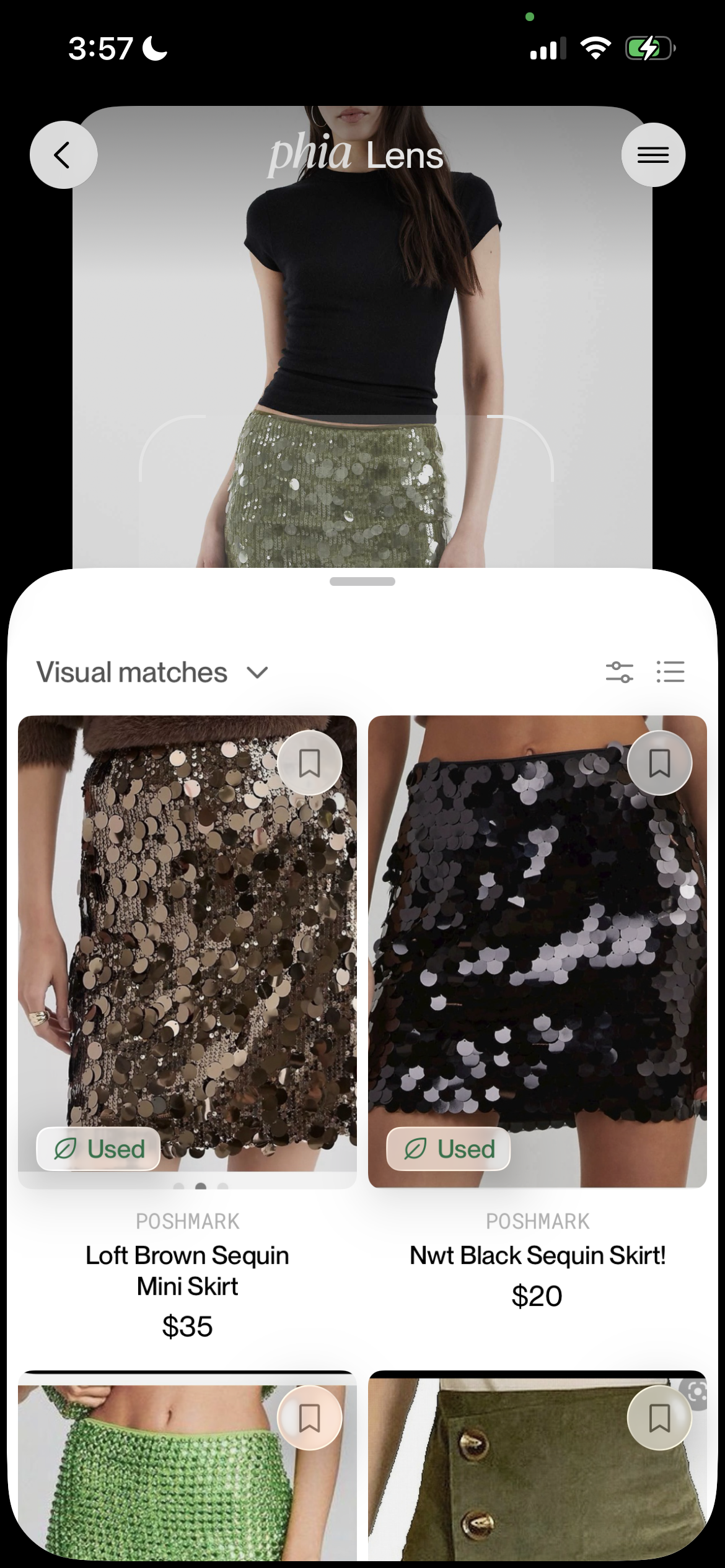

Olive green sequin mini skirt uploaded. Returns black and brown sequin skirts as top results, then green suede and denim skirts — color & key attribute match failed.

Classic Pinterest inspo image. Results are blue floral wallpaper and wide-leg pants: the matcher ranked on a single attribute with no consistency.

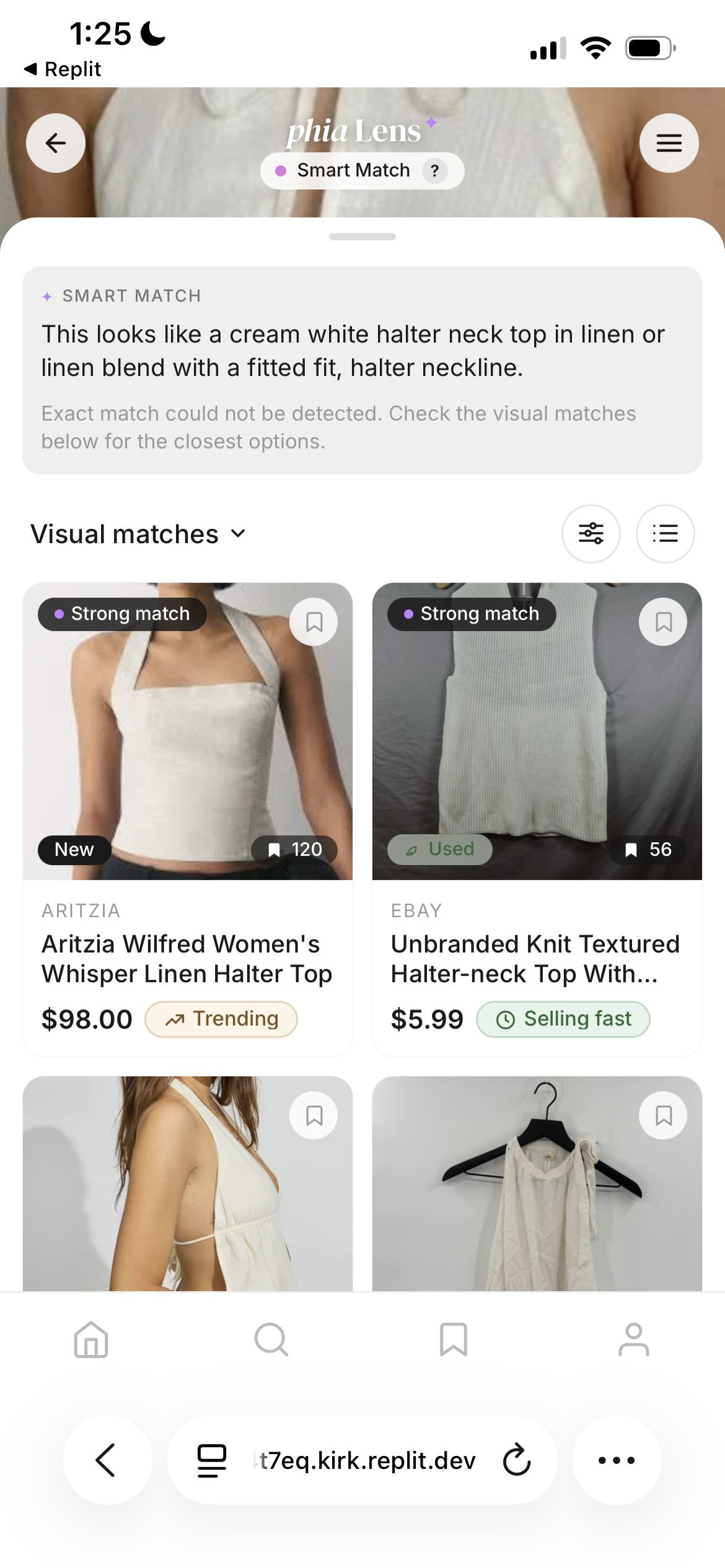

Returns tops with a close neckline match but wrong product style and attributes. No reasoning, no visibility into what the AI detected.

Repeatable across several uploads of different images. It's a consistent failure pattern, and every fallback leaks a session to Google Lens.

Smart Match detects all products and accessories in the frame of any image uploaded.

VLM extracts fine-grained attributes per detected item into structured JSON — garment, color, silhouette, fabric, design details, and accessories.
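A minimal sketch of what that structured JSON could look like once parsed. The field names follow the attribute list above; the class names, the `parse_vlm_output` helper, the image-level placement of accessories, and the example values are illustrative assumptions, not Phia's actual schema.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class DetectedItem:
    # One record per product detected in the frame (hypothetical shape).
    garment: str
    color: str
    silhouette: str
    fabric: str
    design_details: list[str] = field(default_factory=list)


@dataclass
class ImageAttributes:
    items: list[DetectedItem] = field(default_factory=list)
    # Assumption: accessories are listed once per image, not per item.
    accessories: list[str] = field(default_factory=list)


def parse_vlm_output(raw_json: str) -> ImageAttributes:
    """Turn the VLM's JSON string into typed records."""
    data = json.loads(raw_json)
    items = [DetectedItem(**item) for item in data.get("items", [])]
    return ImageAttributes(items=items, accessories=data.get("accessories", []))


# Illustrative payload only, loosely based on the olive green skirt example.
example = json.dumps({
    "items": [{
        "garment": "mini skirt",
        "color": "olive green",
        "silhouette": "a-line",
        "fabric": "sequin",
        "design_details": ["high waist"],
    }],
    "accessories": ["gold hoop earrings"],
})
parsed = parse_vlm_output(example)
```

Typed records make it easy to reject a malformed VLM response early: a missing required field raises a `TypeError` before anything reaches retrieval.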

Retrieval is scoped per product type: each type is queried separately for cleaner, categorized results.

Weighted scoring, 6:4 New:Used render. Best matches first, per product type.
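One way the weighted scoring and the 6:4 New:Used render could be sketched. The attribute weights, the `condition` and `product_type` fields, and the function names are assumptions for illustration; the source states only that scoring is weighted and that results render at 6:4 per product type.

```python
from collections import defaultdict

# Hypothetical integer weights (sum to 100); the real weights are not given.
WEIGHTS = {"garment": 40, "color": 30, "silhouette": 15, "fabric": 15}


def score(query: dict, candidate: dict) -> int:
    """Add up the weight of every attribute that matches the query exactly."""
    return sum(
        weight
        for attr, weight in WEIGHTS.items()
        if attr in query and query[attr] == candidate.get(attr)
    )


def render(query: dict, candidates: list[dict], slots: int = 10,
           new_share: float = 0.6) -> list[dict]:
    """Pick top-scoring new and used items at roughly 6:4, best matches first."""
    ranked = sorted(candidates, key=lambda c: score(query, c), reverse=True)
    new = [c for c in ranked if c["condition"] == "new"]
    used = [c for c in ranked if c["condition"] == "used"]
    n_new = min(len(new), round(slots * new_share))
    # Used items fill whatever slots the new items did not take.
    n_used = min(len(used), slots - n_new)
    picked = new[:n_new] + used[:n_used]
    return sorted(picked, key=lambda c: score(query, c), reverse=True)


def render_by_type(queries_by_type: dict, candidates: list[dict],
                   slots: int = 10) -> dict:
    """Group candidates by product type and render each group separately."""
    grouped = defaultdict(list)
    for candidate in candidates:
        grouped[candidate["product_type"]].append(candidate)
    return {
        ptype: render(query, grouped[ptype], slots)
        for ptype, query in queries_by_type.items()
    }
```

With ten slots and enough inventory on both sides, `render` returns six new and four used items. If new inventory runs short, used items take the remaining slots.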

↗ Trending ⊘ Low stock ⏱ Selling fast — lifts purchase rate.

Per-card reasoning pill with AI context. Exact match surfaced at the best price across partners when a confirmed product is found.

The current system works when the image is already in Phia's catalog. It breaks on screenshots, paparazzi shots, and TikTok grabs — the fastest-growing image upload types.

Detects the product accurately with strong attribute context. No resale inventory, no demand signals, no price comparison.

Returns results on a 1:1 match. No trust signal, no demand context, no match visibility — reduces intent to purchase.

Demand signals, save counts, and urgency pills surfaced. Fine-grained attributes detected from all products per type, with exact match at best price.

Reverse image search on the open web.

Retailer catalog depth, Google-powered.

Visual discovery for style & vibe.

Price comparison and coupons.

Attributes + resale aggregation, on ANY image.

The category gap is attribute-precision × resale-aggregation. Phia is the only player positioned to own it.

~60% of 1M monthly users engage with visual search. Prioritized as the entry point with the highest leverage on the thesis.

Fallback on novel images is a trust-breaker on Phia's primary entry point. Chose this because it targets fundamental accuracy — not a UX refinement.

Rapid-prototyped with Replit Agent 4. Validated across Claude Sonnet 4.6, GPT-4o, and Gemini on 20+ uploads using SerpAPI Google Shopping for real product retrieval. Scored results showed real tier variance — the mechanism holds up before scale.

Scoped to a contained Python scoring layer upstream of retrieval. No embedding-index rebuild is required, which is why I chose to ship additive rather than replacive.

(600,000 × 3 × 0.80) / 2
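The expression above can be evaluated directly. In this sketch, reading 600,000 as roughly 60% of the 1M monthly users is an inference from the engagement figure; the source does not label the remaining factors, so they keep neutral, hypothetical names here.

```python
# 600,000 is read as ~60% of 1M monthly users (an inference, not stated).
engaged_users = 600_000
# The source does not say what these three numbers represent.
multiplier = 3
rate = 0.80
divisor = 2

result = (engaged_users * multiplier * rate) / divisor
print(f"{result:,.0f}")  # prints 720,000
```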

When attributes resolve to a confirmed product, Smart Match surfaces the best price across 6,200+ partners — visual search becomes price comparison.

Tells the user why this card matched — exact color, garment, silhouette. Trust before price.

Three demand signals lift purchase rate on highest-intent sessions.

High save count signals desirability before price registers — the strongest pre-purchase intent indicator on the card.

Phia is AI commerce for high-intent shoppers. Retrieval finds the item. Demand signals sell it without rebuilding the catalog.

Wrong matches erode trust faster than right matches build it.

Guardrail — luxury price-plausibility rule, false-positive counter held below 3%.
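A rough sketch of how such a guardrail might be wired. The 3% ceiling comes from the line above; the price-ratio threshold, the idea of comparing against a reference price, and all names are hypothetical, since the rule itself is not specified.

```python
from dataclasses import dataclass

MIN_PRICE_RATIO = 0.2           # hypothetical plausibility threshold
MAX_FALSE_POSITIVE_RATE = 0.03  # "held below 3%"


def is_price_plausible(listing_price: float, reference_price: float,
                       min_ratio: float = MIN_PRICE_RATIO) -> bool:
    """Reject an 'exact match' priced far below the reference price."""
    return listing_price >= reference_price * min_ratio


@dataclass
class FalsePositiveCounter:
    confirmed: int = 0
    false_positives: int = 0

    def record(self, was_false_positive: bool) -> None:
        """Log one confirmed-match decision and whether it was wrong."""
        self.confirmed += 1
        if was_false_positive:
            self.false_positives += 1

    @property
    def rate(self) -> float:
        return self.false_positives / self.confirmed if self.confirmed else 0.0

    def within_budget(self) -> bool:
        """True while the false-positive rate stays below the 3% ceiling."""
        return self.rate < MAX_FALSE_POSITIVE_RATE
```

A listing at 50 against a 1,000 reference fails the check under these assumed values, so the card would fall back to a similar-item match instead of claiming an exact one.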

VLM latency breaks the sub-2s UX budget.

Mitigation — cache by image hash, parallelize with catalog pre-fetch.